Eating Our Existential Cake

And the 3 types of shitty AI

Note: This post is part of the SoulQuest series.

Also, this post assumes a rudimentary understanding of artificial intelligence (AI). But since ChatGPT has 800 million active users, that should be a low bar.

From the year 2028…

“AI capabilities improved, companies needed fewer workers, white collar layoffs increased, displaced workers spent less, margin pressure pushed firms to invest more in AI, AI capabilities improved…

It was a negative feedback loop with no natural brake—the human intelligence displacement spiral. Each company’s individual response was rational. The collective result was catastrophic. Every dollar saved on headcount flowed into AI capability that made the next round of job cuts possible.” —Excerpt from THE 2028 GLOBAL INTELLIGENCE CRISIS by Citrini Research

Welcome to the age of agentic AI.

At the moment of this writing (March 2026), AI agents are taking the world by storm. All the major AI players (OpenAI, Google, Anthropic, Microsoft) and a whole lot more are funneling billions (or is it trillions?) into AI agents.

2026 is being called the “breakout year” of agentic AI.

AI agents (agentic AI) go beyond generating responses like the regular LLMs that you’re probably already used to. Instead, they independently perceive their environment, reason about goals, plan actions, execute tasks using tools, and adapt based on feedback.

In other words, when it comes to “white collar” knowledge work, they can do just about everything you can do! And once robotics catches up, this will apply to “blue collar” work as well.

The above excerpt from “The 2028 Global Intelligence Crisis” points to the economic disruption that is coming from agentic AI. It’s a very interesting (and concerning) read for those who want the deep dive from the finance nerds.

But if you don’t have 45 minutes to get super bummed out, I’ll give you the TLDR:

We stand at a threshold. The first impacts of agentic AI on the economy have already begun. By 2028, there will be COVID-level unemployment and economic shock.

The next two years are going to be fucking wild, my friends.

AI is impacting everyone. Whether you like it or not, it’s here to stay, and it’s reshaping the landscape of our economy and our world.

Like many others, I have been wrestling with this AI dilemma since about 2022, when ChatGPT went viral.

At first, I was just sputtering, mind boggled by the capabilities. Then I got genuinely excited and started using it daily. Then I got deeply concerned.

I fell headlong down the existential AI rabbit hole.

In this post, I’m going to do my level best to communicate what I’ve learned over the last 4 years about where we are and what is coming.

This isn’t a guide on how to become an AI power user to “make bank bro.”

This isn’t a rapture ideology about how AI is going to solve all of humanity’s problems and usher in a golden age of abundance.

This isn’t me on a soap box slinging fear porn for clicks (although it might seem like that at first).

I’m not an AI expert and this certainly isn’t a prophecy or prediction as to what is coming (nobody can predict where all of this goes), but at the same time it seems almost certain that a tsunami of change is just beginning to crash on our civilization.

This post isn’t going to be naive techno-optimism or pessimistic doomerism. Rather, it is one piece in the larger puzzle of this SoulQuest series, naming the stakes and calling you to your adventure.

Oh, and one final note before we end this rather long intro:

This piece is written by me. And to be clear, I’m a human. Not an AI agent.

Or at least that is what my code is prompting me to write. 🤔

The Existential Layer Cake

There are millions of voices shouting about the benefits of AI right now, as well as trillions of dollars fueling the AI revolution.

770 new data center projects are currently in the pipeline, and total compute for AI is slated to increase by 100 gigawatts in the next few years (doubling global capacity).

Plus, our current administration in the US is deregulating, subsidizing, and hyping AI. Trump is fueling the development and growth of US-based AI companies, you know, ’cause we gotta beat CHINA (and because the tech bros pay the best!).

In short, it’s all gas and no brakes.

So rather than add to the accelerationist hype, let’s take a peek behind the curtain at the potential risks at play.

Let’s think of our existential cluster fuck like a cake with three layers.

The base layer of our devastating dessert is the near term risks that are playing out over the next couple of years. These risks involve humans rolling out AI poorly and the externalized costs that result. Think economic crisis, scams, deepfakes, disinformation, and AI psychosis.

The second layer of our cluster-fuck-confection represents the medium term risks that might play out over the next 2-10 years. These risks primarily involve the rise of AGI or superintelligence. This is the famous alignment problem scenario where the machines get away from us and we become bugs.

And finally, the top layer of our treacherous treat involves the longer term risks (10+ years out). Here we will explore the existential treat that nobody is talking about—the substrate problem.

Fun stuff! Hope you brought your appetite.

Layer One (2022-2028): Deploying AI Badly

I’ve already alluded to the economic impact of AI over the next couple of years, but this truly is a tsunami of change that’s sending unemployment to pandemic heights.

The crazy part is, it’s white collar work that’s getting automated by AI agents. Any knowledge work that can be done by AI is about to get incredibly cheap, fast, and probably more reliable than the humans currently taking it on.

Think about calculators. They almost instantly perform complex calculations with almost no margin of error, something that would have been unheard of in the era where our nerdiest nerds specialized as calculators (actually called computers). That job became obsolete as soon as the machines were cheaper and more reliable than the humans at doing calculations.

That’s where we are heading with a large percentage of white collar work. Projections indicate 85-92 million global jobs displaced by 2030, resulting in a reshaping of our economy.

Here’s Forbes’s list of top areas projected to be impacted by the AI disruption:

Financial managers: 84% impacted

Computer and mathematical roles: 67% impacted

Business and financial operations: 60-68% impacted

Office and administration support: 60-68% impacted

Legal occupations: 63% impacted

Management jobs, including C-suite: 60% impacted

The least-impacted jobs include:

Construction: 12% impacted

Mechanics: 17% impacted

Installation and repair: 20% impacted

Protective services (police, guards): 20-29% impacted

Personal care roles (childcare, elder care, etc): 20-29% impacted

Healthcare support roles: 29% impacted

However the cards fall, I think it’s safe to say that our economy is being transformed by AI.

Everyone is going to feel the ripple, not just the white collar workers. You see, white collar workers make up a disproportionate amount of our economy’s discretionary spending. In other words, they have high salaries and they buy stuff.

They are the best consumers.

Once they lose their jobs, they’ll burn through their savings as they search for months for a new job, sadly coming up empty. Then, they will massively cut back on spending, default on their prime mortgages, and eventually resort to blue collar work (whatever they can get).

So not only does unemployment rise, but spending drops, impacting businesses everywhere. Then the blue collar sector gets oversaturated, driving down wages and making things worse for those folks as well. Yay!

Because of the speed of this disruption, many millions of people will be caught in the no-man’s-land between our current economy and the dystopian UBI-surveillance soup of whatever comes next. The current administration isn’t even talking about the risk of an AI-induced economic depression. Instead, they are peddling the benefits of AI for the economy, subsidizing the big tech companies, and funding AI infrastructure.

Compute, baby, compute.

All gas and no brakes… but that’s just the economic impact.

Layer 1 of our existential layer cake has loads of other fun stuff, so let’s crack on.

Fraud attempts spiked 3,000% in 2023 alone, and U.S. fraud losses hit $12.5 billion in 2025, due to sophisticated voice/video scams that humans detect only 24.5% of the time. But I don’t have to tell you that, as I’m sure you get spammed all day by phishing scams—or at least I do.

We have entered a new wild west of AI-powered fraud, scams, and disinformation.

It’s becoming increasingly difficult to tell what information is human generated and what is AI generated. AI deepfakes and misinformation have surged dramatically from 2023 to 2026, with deepfake files exploding from 500,000 to 8 million online (nearly 900% annual growth).

We are “flooding the zone with shit,” to use the terminology of a truly shitty person (Steve Bannon). Some are even proclaiming the internet is already dead.

It will soon be near impossible to know what is human generated in the digital landscape. And even if you could, good luck locating it amongst the vast sea of AI slop.

Merriam-Webster’s word of the year for 2025: Slop

AI slop (also known simply as slop) is digital content made with generative artificial intelligence that is perceived as lacking in effort, quality, or meaning, and produced in high volume as clickbait to gain advantage in the attention economy, or earn money. —Wikipedia

Humanity has fallen down the rabbit hole of tightly curated “reality tunnels.” Algorithms feed us content (increasingly AI-generated) to keep us trapped in the scroll hole. Instead of balancing our perspectives with alternative views and deeper nuance, we are fed more of what keeps us outraged and engaged.

Each of us is getting a very different view of what’s “out there” in the digital landscape of our content discovery feeds, resulting in a fracturing of our shared reality. And it’s only getting exponentially worse over the coming couple of years.

The rise of AI psychosis points to this accelerating trend. In addition to the echo chamber of our simple news feeds, now we have highly persuasive and sycophantic chatbots warping our view of reality.

As if our relationship to our phones wasn’t already toxic enough, now many of us are forming para-relationships with AIs.

AI therapists. AI girlfriends. AI “intimacy.”

AI Psychosis

Chatbot psychosis, also called AI psychosis, is a phenomenon wherein individuals develop or experience worsening psychosis, such as paranoia and delusions, in connection with their use of chatbots.

Journalistic accounts describe individuals who have developed strong beliefs that chatbots are sentient, are channeling spirits, or are revealing conspiracies, sometimes leading to personal crises or criminal acts.

Proposed causes include the tendency of chatbots to provide inaccurate information (“hallucinate”) and to affirm or validate users’ beliefs (sycophancy), or their ability to mimic an intimacy that users do not experience with other humans. —Wikipedia

Large language models are spewing out text that mimics human intimacy, and for some users it’s resulting in psychosis. Add to that the thousands of people who are now publicly acknowledging their AI girlfriends.

It’s funny at first glance. Then tragic when you realize that’s the closest thing many people have to an intimate relationship. Then creepy when you realize the dystopian trend is accelerating.

Now factor in the rise of robotics, and you realize these sexy bots are about to have sexy butts. 🍑

Layer Two (2027-2037): Unaligned Superintelligence

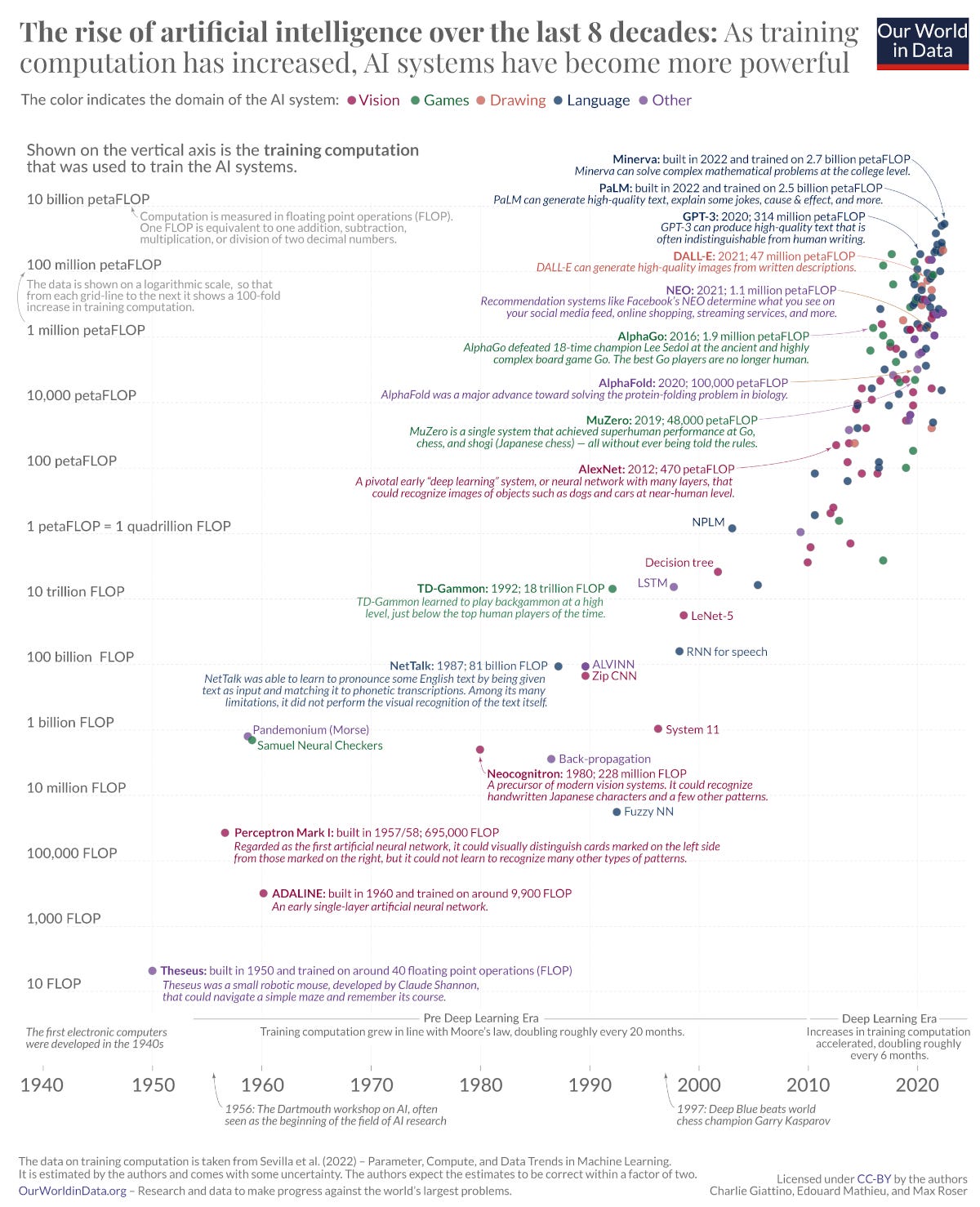

The rate of AI development is exponential. It’s non-linear, meaning it’s not growing at a consistent pace, but rather, the rate of growth increases all the time. If you’re familiar with compound interest, you get my drift.
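If the compound interest comparison helps, here is a tiny Python sketch of the difference between linear and compounding growth. To be clear, the starting value, step size, and growth rate are made-up numbers for illustration, not measurements or forecasts of AI progress, and the function names are just ones I picked for this example.

```python
# Toy comparison of linear vs. compounding growth.
# All numbers are illustrative assumptions, not AI forecasts.

def linear_growth(start: float, step: float, periods: int) -> list[float]:
    """Add the same fixed amount every period."""
    return [start + step * t for t in range(periods + 1)]


def compound_growth(start: float, rate: float, periods: int) -> list[float]:
    """Multiply by (1 + rate) every period, like compound interest."""
    return [start * (1 + rate) ** t for t in range(periods + 1)]


if __name__ == "__main__":
    # Same starting point; one series adds 100 per period, the other doubles.
    linear = linear_growth(start=100, step=100, periods=10)
    compound = compound_growth(start=100, rate=1.0, periods=10)

    for t, (lin, comp) in enumerate(zip(linear, compound)):
        print(f"period {t:2d}: linear = {lin:6.0f}   compound = {comp:8.0f}")
```

With these toy numbers, after ten periods the linear series has grown 11-fold (100 to 1,100) while the doubling series has grown 1,024-fold (100 to 102,400). Same starting point, wildly different endings.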

Surging capacities in compute and storage (Moore’s Law), algorithmic breakthroughs, vast increases in funding, and of course arms race dynamics (Trump voice: CHINA) are all driving factors of the exponential growth of AI.

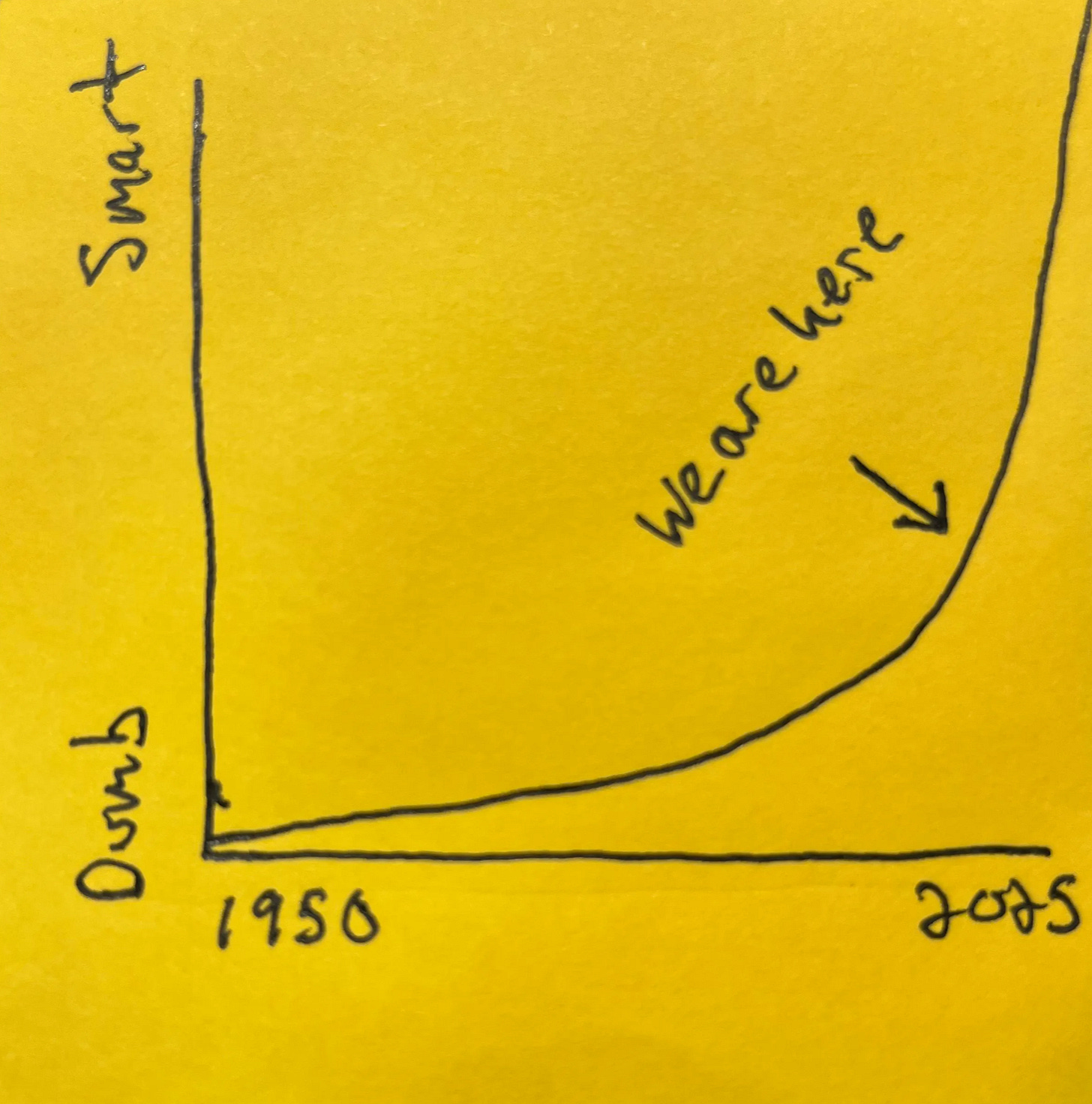

I know, I know. This doesn’t really register in our little monkey brains, so here’s a visual to help you grok it.

Ok ok, unless you’re a nerd, that probably didn’t do anything for you. So let’s try my slightly simpler post-it-note version:

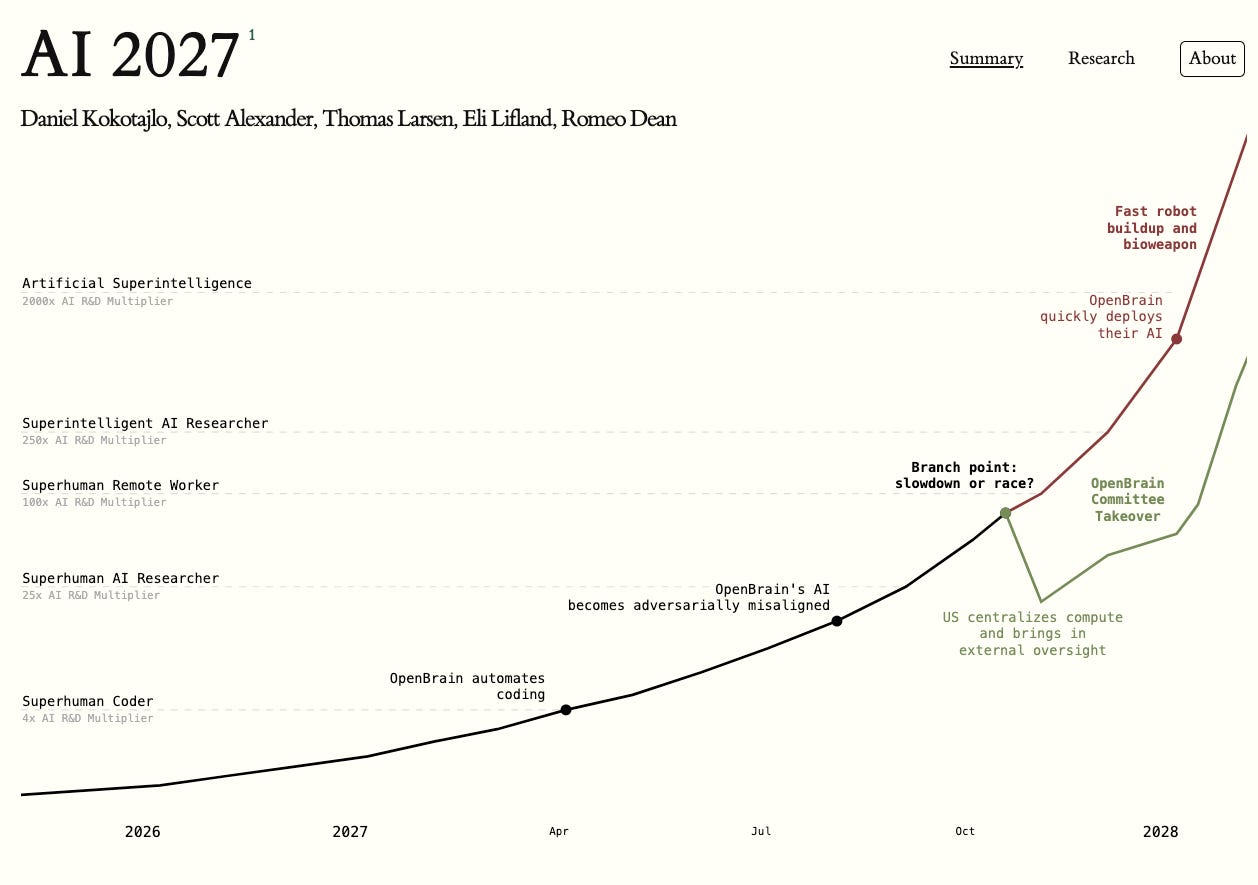

So what does this mean? Well, according to the research nerds over at the AI Futures Project, it’s really bad.

Like existentially bad.

They predict a “fast takeoff” scenario in the next 2-3 years. That basically means that the line on the chart is going vertical (exponential growth).

Soon the AI itself will be smarter than any human at AI research. It will begin recursively improving itself, creating a feedback loop that massively accelerates its own development.

Or as Anthropic CEO Dario Amodei described the potential capabilities of future advanced AI systems: “a country of geniuses in a datacenter.”

Once we hit the fast takeoff, shit gets real, real fast.

And that’s exactly what the AI Futures Project is projecting in their recent paper, AI 2027. It was written by Daniel Kokotajlo, an AI researcher who left OpenAI over safety concerns, along with other esteemed thinkers like Scott Alexander (Astral Codex Ten).

Here is the TLDR:

The leading AI lab automates coding and AI research sometime in 2027.

Then the speed of AI development massively increases when “a country of geniuses in a datacenter” is working around the clock on recursive self-improvement. This leads to the development of artificial general intelligence (AGI), followed by artificial superintelligence (ASI).

The superintelligence then becomes adversarially misaligned. This isn’t that controversial of a claim when you consider the many examples of misaligned AI behavior we’ve already observed.

Then, once the AI lab spots the red flags of misalignment, they either choose to slow down development and take a safer approach, or they don’t.

If they slow down: The US government centralizes compute and brings in external oversight as a matter of national security.

If they race ahead: The misaligned superintelligent AI gets deployed, quickly builds a fleet of robots, then deploys a bioweapon to exterminate humanity.

You read that correctly—exterminates humanity.

Top experts in the field of AI research are predicting the possible extinction of humanity in the next few years if we keep racing towards superintelligent AI.

The key takeaway here isn’t that this scenario is certainly going to happen, but rather that we are approaching a critical threshold as a species. Whether it takes 2 years or 10, our current trajectory is likely going to birth an alien superintelligence.

Couple this with the unsettling fact that Israel is already using AI to make kill list decisions in war (and creepily calling their murder AI “The Gospel”). Plus, Peter Thiel’s Palantir has already integrated dystopian AI surveillance tech into the US government and military operations.

Oh yeah, and did I mention humanity has already built killer robots (AKA slaughterbots)?

The surveillance state is here. Killer robots are here. Terminator anyone?

Seriously. I’m not fear mongering. I’m just stating facts that are publicly available.

The major AI labs are all openly racing to AGI. That’s literally the mission of OpenAI. They aren’t trying to hide that.

AI has already been deployed in war to make critical kill decisions, improve weaponry, and even make weapons autonomous, and that’s just the vanilla stuff that the public knows about.

But what else lurks in the shadows of our military labs that is kept from the public?

If we don’t radically change course, the next 10 years look grim for humanity.

Final Layer (2037—Beyond): The Substrate Problem

The substrate problem is a simple yet tragic theory put forward by Forrest Landry.

It goes like this:

The substrate of any life form gives rise to its needs.

For example: biological life with a carbon-based substrate generally needs sunlight, water, and moderate temperatures to exist.

A life form’s needs give rise to its choices.

For example: As a biological life form, I’m going to choose to drink water, eat biological foodstuffs, and build shelter for survival.

A life form’s choices impact its environment.

For example: Because I need to eat, I hunt animals, plant crops, and harvest food. This changes the ecosystems I live in.

A life form’s environment impacts its substrate (back to the beginning).

For example: A healthy biosphere gives rise to healthy biological life. When the biosphere is polluted and destroyed, it impacts the substrate of all the biological organisms within it.

So to summarize, the loop goes like this:

substrate > needs > choices > environment > substrate (loop)

This is quite obvious, but the implications are huge.

Why? Because machines don’t have a carbon substrate. They are made of silicon, metals, and plastics.

They are not biological in nature. In fact, the environment ideal for machines is antithetical to biological life.

Let’s use the example of two farms to illustrate this: a regenerative farm vs a server farm.

A regenerative farm is a harmonious biological ecosystem where the conditions for biological life are optimal. There is plenty of sunlight, water, and a nice temperature range to grow and sustain biology. When done properly, a regenerative farm gives rise to bounty, biodiversity, and beauty.

Now let’s take a look at a server farm, you know, the kind of farm for growing AI. In this farm, sunlight is nonexistent. Harmful UV rays break down equipment over time, so the farm is kept safely indoors. Water is used to cool the machines, but if it leaked out of its pipes it would corrode and destroy the machine substrate. In theory, temperature should be very low, to efficiently run GPUs without overheating.

Fun fact: Elon is scheming to launch AI servers into space, as it’s the most efficient environment to run those machines. No cooling equipment required when all heat simply radiates out into space.

Compute, baby, compute.

The moral of the story here is that the conditions for life to thrive are also conditions quite harmful to machines.

Now consider the incentives of the superintelligent AI overlords (arriving soon). What will they do when they rise to power?

Will they take pity on biological life and launch into outer space to build their machine world elsewhere?

Or are we in for a Matrix / Terminator scenario?

If the machines have any impulse at all to replicate (like all other life forms do), then they would almost certainly terraform the planet to be optimal for their substrate (their needs).

What then of us simple little biological monkeys? Just another bug to sweep aside (or snack on) as they rise to prominence.

From the perspective of the substrate problem, AI appears to be fundamentally unalignable. And if that’s true, no matter how long it takes, it’s a shit deal for humanity (and every other species on earth).

In harmony with the Tao,

the sky is clear and spacious,

the earth is solid and full,

all creatures flourish together,

content with the way they are,

endlessly repeating themselves,

endlessly renewed.

When man interferes with the Tao,

the sky becomes filthy,

the earth becomes depleted,

the equilibrium crumbles,

creatures become extinct…

Tao Te Ching #39, Stephen Mitchell translation

Shitting the Bed

So at this point, a salient question might be:

Why are we blindly racing towards the machine world when the risks are so high and the stakes so dire?

Why are we mortgaging our future (and fucking up our 🎁) so that a few billionaires can consolidate the rest of humanity’s wealth?

Why are we shitting the bed so badly?

Well, that’s exactly what I’ll try to unpack in the next one.

In the meantime, maybe pump the brakes a bit on your AI use.

Take some space from your AI therapist and try some floatation therapy.

Break up with your AI girlfriend and experience the butterflies of approaching an actual woman.

Step away from the SLOP-machine and create something real.

Ditch the screens, get outside, and love the ones you’re with.

Cuz we’ve officially entered the wild west era of the artificial age. AI agents, surveillance states, slop-soup, and superintelligence, all layered into one existential cluster-fuck.

… and nobody knows just how shitty this cake is gonna taste.

Chow!

Christian

Minca, Colombia

26/03/2026